There is a quiet contradiction at the heart of the AI revolution. The same companies racing to build the most powerful AI systems, systems they promise will solve climate change, accelerate clean energy and redesign how humanity uses resources, are simultaneously driving one of the fastest-growing sources of carbon emissions on the planet.

This is not a niche concern reserved for climate scientists or environmental activists. It is an urgent and compounding engineering, policy and business problem that is already manifesting in real, measurable ways: strained electricity grids, rising consumer energy bills, depleted water supplies in drought-prone regions and a widening gap between the sustainability pledges Big Tech makes in public and the emissions data buried in their annual reports.

The conversation around AI’s environmental impact tends to oscillate between two unhelpful extremes. One camp dismisses the concern entirely, pointing out that a single ChatGPT query uses less electricity than boiling a kettle. The other paints a picture of AI servers melting the planet. Neither framing is honest. The truth is more structural, more systemic and ultimately more urgent than either camp acknowledges.

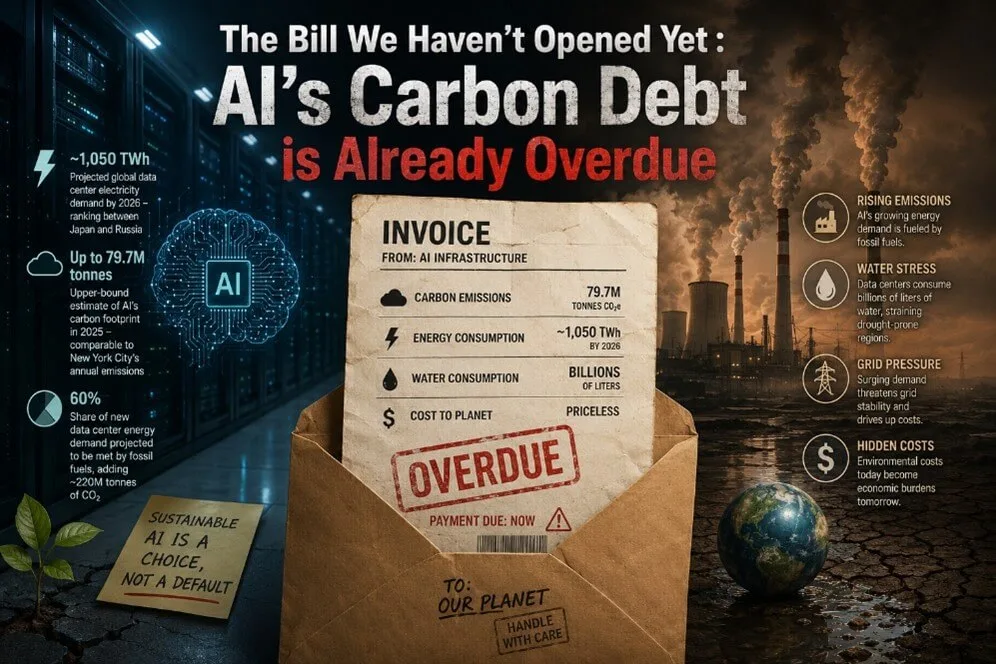

~1,050 TWh

Projected global data center electricity demand by 2026, a rapid surge that would place data centers between Japan and Russia in a ranking of national electricity consumption

79.7M tonnes

Upper-bound estimate of AI’s carbon footprint in 2025 — comparable to New York’s annual emissions

60%

Share of new data center energy demand projected to be met by fossil fuels, adding ~220M tonnes of CO₂

The Cornell research roadmap is worth engaging with carefully, because it refuses the comfort of both false optimism and fatalism. The current trajectory, if uninterrupted, leads to an AI infrastructure whose carbon and water footprint will make the industry’s net-zero commitments functionally impossible to meet. But the same analysis shows that a combination of three practical, achievable interventions could cut carbon impact by approximately 73% and water consumption by approximately 86% relative to worst-case scenarios: smarter facility siting, accelerated grid decarbonization in AI-heavy regions, and operational efficiency improvements, including advanced liquid cooling and better server utilization. The tools exist. The technology is ready. What is missing is coordination.

At the policy level, the most impactful near-term intervention may be mandatory environmental disclosure for AI workloads specifically, with standardized metrics that allow independent verification. Currently, the opacity of corporate reporting makes it essentially impossible to hold any individual company accountable for the emissions generated by specific AI products. Without that accountability, there is no market signal to reward efficiency and penalize waste.

At the engineering level, the shift towards smaller, more specialized models for tasks that do not require the full capability of a frontier system offers one of the most promising near-term efficiency gains. Running a 7-billion-parameter model for tasks that need no more than that, rather than defaulting to a 200-billion-parameter model for every query, reduces energy consumption per response dramatically without sacrificing output quality on those tasks.
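To make the routing idea above concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a real system: the tier names, the task categories each tier is said to handle, and the fallback rule are all hypothetical. The `relative_compute` helper uses parameter count as a rough proxy for per-response compute, which is itself a simplification; actual energy use also depends on hardware, batching, response length and model architecture.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical model label
    params_billions: float    # model size in billions of parameters
    handles: frozenset[str]   # task categories assumed to see no quality loss


# Hypothetical tiers, mirroring the 7B vs 200B comparison in the text.
TIERS = [
    ModelTier("small-7b", 7.0,
              frozenset({"classification", "extraction", "summarization"})),
    ModelTier("frontier-200b", 200.0,
              frozenset({"classification", "extraction", "summarization",
                         "multi_step_reasoning"})),
]


def route(task: str, tiers: list[ModelTier] = TIERS) -> ModelTier:
    """Return the smallest tier that declares it can handle `task`."""
    candidates = [t for t in tiers if task in t.handles]
    if not candidates:
        # Assumption: unknown task types fall back to the largest tier.
        return max(tiers, key=lambda t: t.params_billions)
    return min(candidates, key=lambda t: t.params_billions)


def relative_compute(tier: ModelTier, baseline: ModelTier) -> float:
    """Rough proxy only: ratio of parameter counts, not measured energy."""
    return tier.params_billions / baseline.params_billions


if __name__ == "__main__":
    frontier = TIERS[-1]
    for task in ("classification", "multi_step_reasoning", "translation"):
        chosen = route(task)
        print(task, chosen.name, relative_compute(chosen, frontier))
```

The design choice worth noting is the default: a router that falls back to the largest model for anything it does not recognize protects quality but only saves energy on the task types someone has explicitly validated for the smaller tier. How aggressive that allow-list should be is a judgment call that depends on evaluation data this sketch does not include.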

The real test of whether artificial intelligence is genuinely useful to humanity may not be whether it can write a sonnet, pass a bar exam or generate a photorealistic image. It may be whether the industry that builds it can take the relentless optimization instincts it uses to tune a model (the obsessive pursuit of efficiency, the willingness to discard comfortable defaults, the commitment to measuring what actually matters) and apply them to the problem of building one without burning the world it was designed to improve.

We are still in the build-out moment. The infrastructure choices being made right now, in boardrooms and planning committees and grid negotiations, will shape the environmental profile of AI for the next two to three decades. That window is open. It will not stay open indefinitely.

Hasitha Dissanayake – Solutions Engineer